Subscription Cancellation Review Analysis: Mining Churn Signals From Customer Feedback

Subscription fatigue is at an all-time high. Learn how to systematically analyse cancellation reviews, exit surveys, and app store feedback to identify churn drivers, predict at-risk subscribers, and design interventions that actually reduce cancellation rates.

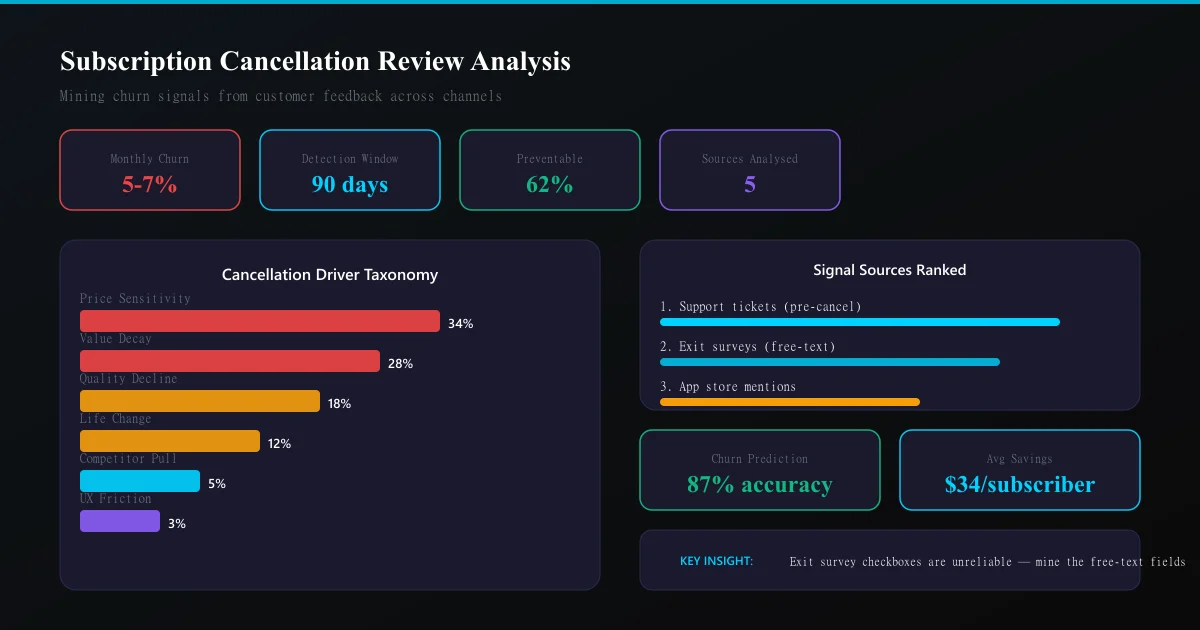

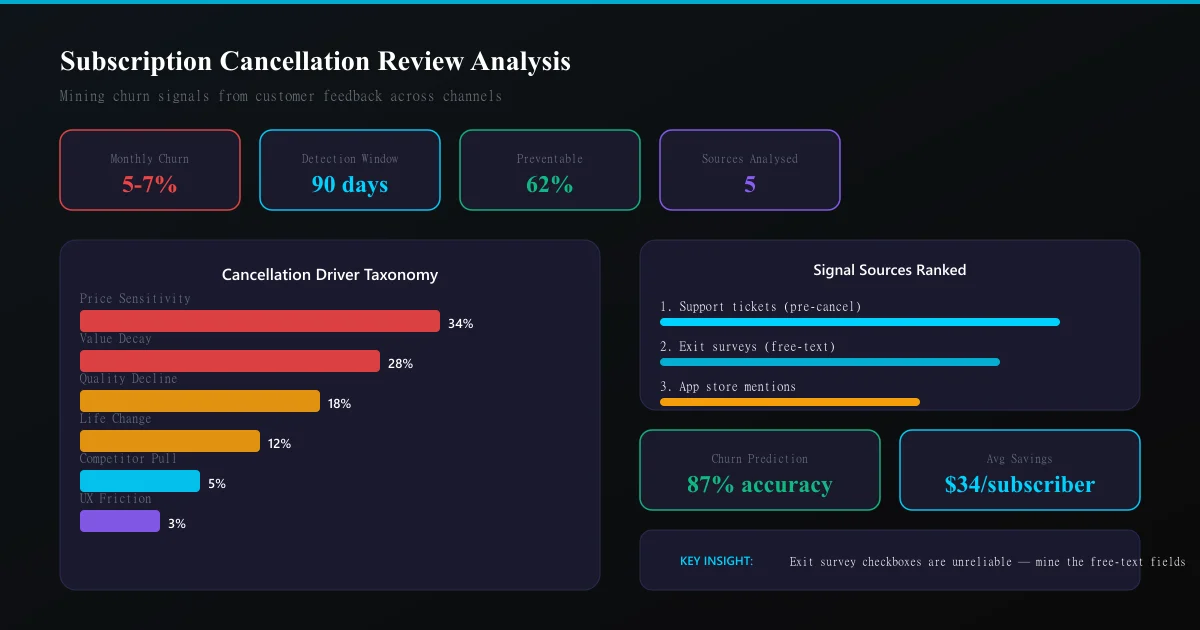

The average subscription business loses 5-7% of subscribers every month. That is not just a number. Compounded, it leaves only about 27-40% of today's subscribers after eighteen months: a slow bleed that becomes existential risk if left unchecked.

Here is the painful truth: most subscription companies treat cancellation feedback as an afterthought. They collect exit survey data, file it away, and return to the urgent work of acquiring new customers to replace the ones leaving. Meanwhile, the answers to why people leave — and how to stop them — sit unread in review platforms, app stores, and survey databases.

This guide shows you how to turn that cancellation feedback into a systematic early-warning system.

Why cancellation reviews are different from regular reviews

Regular product reviews capture a moment-in-time reaction. A subscriber who cancels is telling you something fundamentally different: they evaluated the ongoing relationship and decided the cost outweighed the benefit.

This distinction matters for analysis because:

- Cancellation reviews contain comparative language — subscribers reference what they expected vs. what they received over time

- They reveal threshold effects — the specific trigger that converted dissatisfaction into action

- They often mention competitors — giving you direct intelligence on where customers go next

- Timing patterns correlate with billing cycles — revealing price sensitivity vs. value perception

A subscription box review analysis tells you what subscribers think during their tenure. Cancellation review analysis tells you what finally broke the relationship.

The five sources of cancellation intelligence

1. Exit surveys (first-party)

The most direct signal, but also the most biased. Cancelling customers pick the fastest checkbox to escape the flow. You need to analyse the free-text "other" responses, not the dropdown selections.

2. App store reviews mentioning cancellation

Search for keywords: "cancelled," "unsubscribed," "waste of money," "not worth it," "stopped using." These are unprompted, unfiltered, and often brutally honest.
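As a minimal sketch of that keyword search, the snippet below filters a list of review texts with a case-insensitive pattern. The function name, the sample reviews, and the extra US spelling "canceled" are assumptions added for illustration; the remaining keywords come from the list above.

```python
import re

# Keywords from the list above, plus the US spelling "canceled" (an assumption).
CANCELLATION_KEYWORDS = [
    "cancelled", "canceled", "unsubscribed",
    "waste of money", "not worth it", "stopped using",
]

# One case-insensitive pattern that matches any of the keywords.
_PATTERN = re.compile(
    "|".join(re.escape(k) for k in CANCELLATION_KEYWORDS), re.IGNORECASE
)

def find_cancellation_reviews(reviews):
    """Return the reviews whose text contains at least one cancellation keyword."""
    return [r for r in reviews if _PATTERN.search(r)]

# Hypothetical sample data.
sample_reviews = [
    "Great app, use it daily.",
    "Cancelled after three months. Not worth it.",
    "Total WASTE OF MONEY, I stopped using it in week two.",
]
matches = find_cancellation_reviews(sample_reviews)
```

Plain substring matching will miss paraphrases ("ended my membership"), so treat the keyword list as a starting point to extend with your own product's vocabulary.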

3. Social media complaint threads

Reddit threads, Twitter/X posts, and community forums where users discuss cancellation decisions. These contain the social proof that influences others to cancel.

4. Customer support tickets pre-cancellation

The tickets filed 30-60 days before cancellation reveal the unresolved friction that drove the decision. This is your highest-signal data source.

5. Review platform ratings over time

A subscriber who downgrades their public review from 4 stars to 2 stars is broadcasting churn intent before they act on it. Sentiment analysis tools can detect this drift.

The cancellation taxonomy framework

Not all churn is equal. Your analysis needs to categorise cancellation drivers into actionable buckets:

| Category | Signal phrases | Intervention type |
|---|---|---|
| Price sensitivity | "too expensive," "not worth the cost," "found cheaper" | Pricing/plan restructure |
| Value decay | "same stuff," "boring," "nothing new" | Product innovation |
| Quality decline | "worse than before," "quality dropped," "used to be good" | QA/operations |
| Life change | "moving," "no longer need," "situation changed" | Win-back timing |
| Competitor pull | "switched to X," "X does it better," "found alternative" | Competitive positioning |
| Friction/UX | "hard to cancel," "couldn't pause," "no flexibility" | Experience design |
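One rough way to apply the taxonomy is a phrase lookup, sketched below. The dictionary mirrors the signal phrases in the table; the function name is hypothetical, and shortening "switched to X" and "X does it better" to "switched to" and "does it better" is an assumption made so the phrases can match any competitor name.

```python
# Signal phrases copied from the taxonomy table. The competitor phrases are
# shortened so they match regardless of which competitor is named (assumption).
TAXONOMY = {
    "Price sensitivity": ["too expensive", "not worth the cost", "found cheaper"],
    "Value decay": ["same stuff", "boring", "nothing new"],
    "Quality decline": ["worse than before", "quality dropped", "used to be good"],
    "Life change": ["moving", "no longer need", "situation changed"],
    "Competitor pull": ["switched to", "does it better", "found alternative"],
    "Friction/UX": ["hard to cancel", "couldn't pause", "no flexibility"],
}

def categorise(text):
    """Return every taxonomy category whose signal phrases appear in the text."""
    lowered = text.lower()
    return [
        category
        for category, phrases in TAXONOMY.items()
        if any(phrase in lowered for phrase in phrases)
    ]

labels = categorise("Too expensive, and honestly it's the same stuff every month.")
```

Substring matching is crude ("moving" also matches "removing"), so this is best used as a first pass before a proper classifier, not as a replacement for one.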

Each category requires a fundamentally different response. Treating all churn the same — with a desperate discount offer — fails because it only addresses price sensitivity (one of six categories).

Temporal analysis: when cancellation signals appear

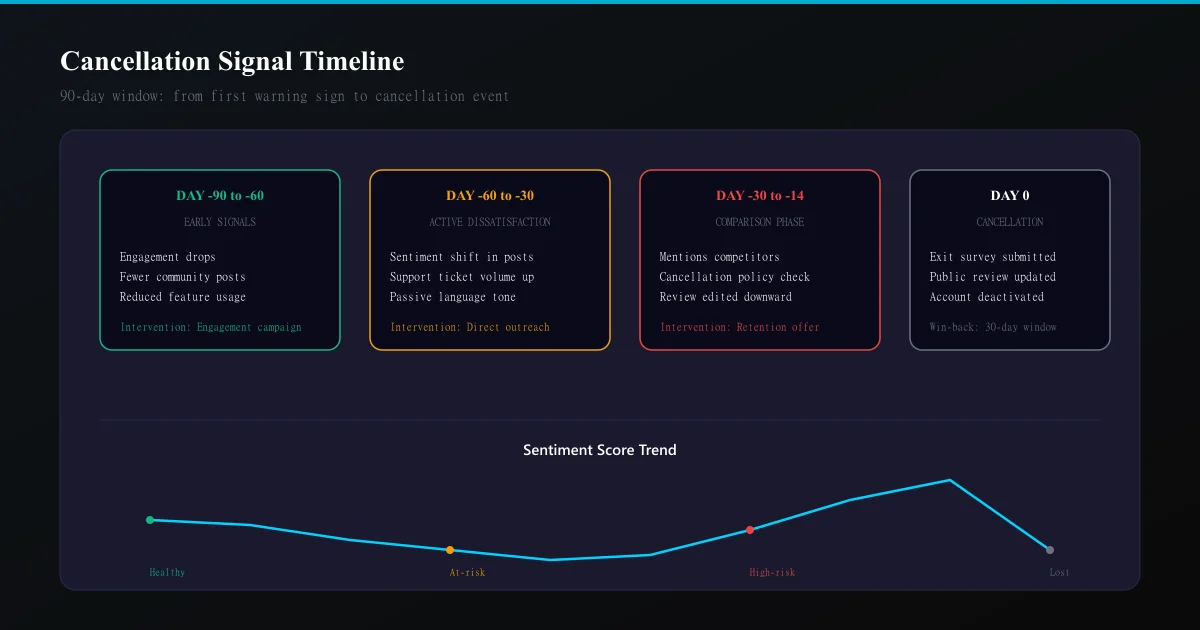

The most valuable insight from cancellation review analysis is not what people say — it is when they say it relative to the cancellation event.

The 90-day churn window:

- Day -90 to -60: Engagement drops. Review platforms show reduced activity (fewer likes, fewer "helpful" votes on community content)

- Day -60 to -30: Sentiment shifts. Support tickets increase. Language in community posts turns passive ("I guess it's fine") rather than enthusiastic

- Day -30 to -14: Active comparison behaviour. Reviews mention competitors. Questions about cancellation policy appear

- Day -14 to 0: Decision crystallised. Exit survey completed. Public review may be edited downward

If you are only analysing feedback at the moment of cancellation, you are 90 days too late for intervention.
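To place historical feedback on this timeline, you can label each item by how many days before the cancellation it was written. The sketch below assumes you know both dates; the function name and stage labels are illustrative. Because the stated ranges share their boundary days, a boundary day is assigned to the later stage here, which is an arbitrary choice.

```python
from datetime import date

def churn_window_stage(feedback_date, cancellation_date):
    """Map a piece of feedback to a stage of the 90-day churn window.

    Returns None when the feedback falls outside the window
    (more than 90 days before cancellation, or after it).
    """
    days_before = (cancellation_date - feedback_date).days
    if days_before < 0 or days_before > 90:
        return None
    if days_before > 60:
        return "engagement drop"       # day -90 to -61
    if days_before > 30:
        return "sentiment shift"       # day -60 to -31
    if days_before > 14:
        return "active comparison"     # day -30 to -15
    return "decision crystallised"     # day -14 to 0

# Hypothetical example: feedback written 45 days before cancellation.
stage = churn_window_stage(date(2024, 3, 1), date(2024, 4, 15))
```

Labelling past cancellers this way gives you the training examples needed to recognise the same stages in subscribers who have not cancelled yet.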

Methodology: from raw feedback to churn prediction

Step 1: Aggregate across all sources

Pull cancellation-adjacent text from all five sources into a single corpus. Tag each item with:

- Subscription tenure at time of feedback

- Plan type / price point

- Acquisition channel (organic, paid, referral)

- Last interaction with support
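A single record type keeps the tags consistent across sources. The sketch below is one possible shape, not a prescribed schema: every field name, the source labels, and the `build_corpus` helper are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class FeedbackRecord:
    """One piece of cancellation-adjacent text plus the tags from Step 1."""
    text: str
    source: str                 # e.g. exit_survey, app_store, social, support_ticket, review_platform
    tenure_days: int            # subscription tenure at time of feedback
    plan: str                   # plan type
    monthly_price: float        # price point
    acquisition_channel: str    # organic, paid, or referral
    days_since_support_contact: Optional[int] = None  # None if never contacted

def build_corpus(*source_batches):
    """Merge per-source lists of FeedbackRecord into one corpus."""
    corpus = []
    for batch in source_batches:
        corpus.extend(batch)
    return corpus

# Hypothetical sample data from two of the five sources.
surveys = [FeedbackRecord("Too expensive for what I get", "exit_survey", 210, "premium", 19.99, "paid", 12)]
tickets = [FeedbackRecord("Still can't pause my plan", "support_ticket", 95, "basic", 9.99, "organic", 0)]
corpus = build_corpus(surveys, tickets)
```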

Step 2: Topic clustering

Use NLP to cluster cancellation reasons into your taxonomy categories. Look for:

- Primary driver (the stated reason)

- Secondary frustrations (mentioned but not the trigger)

- Emotional intensity (measured through language strength)
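The sketch below is a keyword heuristic standing in for real topic clustering, intended only to show the three tags side by side. It assumes the first-mentioned category is the primary driver, which will not always hold, and the phrase lists, intensifier words, and scoring rule are all hypothetical.

```python
import re

# Abbreviated, hypothetical phrase lists; use your full taxonomy in practice.
SIGNALS = {
    "price": ["too expensive", "not worth the cost"],
    "value_decay": ["same stuff", "nothing new", "boring"],
    "friction": ["hard to cancel", "couldn't pause"],
}
# Hypothetical intensifier list used as a rough proxy for language strength.
INTENSIFIERS = {"never", "worst", "terrible", "awful", "absolutely", "ridiculous"}

def tag_feedback(text):
    """Tag one piece of feedback with primary driver, secondary frustrations, intensity."""
    lowered = text.lower()
    first_hit = {}
    for category, phrases in SIGNALS.items():
        positions = [lowered.find(p) for p in phrases if p in lowered]
        if positions:
            first_hit[category] = min(positions)
    # Assumption: the category mentioned first is the primary driver.
    ordered = sorted(first_hit, key=first_hit.get)
    words = re.findall(r"[a-z']+", lowered)
    intensity = sum(w in INTENSIFIERS for w in words) + text.count("!")
    return {
        "primary": ordered[0] if ordered else None,
        "secondary": ordered[1:],
        "intensity": intensity,
    }

tags = tag_feedback("Same stuff every month, absolutely boring! And too expensive.")
```

A production pipeline would more likely use embeddings or a trained classifier, but the output shape (one primary driver, a list of secondary frustrations, an intensity score) can stay the same.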

The secondary frustrations matter enormously because they reveal what would need to change for a win-back campaign to succeed.

Step 3: Cohort comparison

Compare cancellation feedback from different subscriber cohorts:

- Monthly vs. annual subscribers (annual cancellers are more committed, so their reasons carry more weight)

- First-month vs. long-term cancellers (different failure modes)

- High-engagement vs. low-engagement cancellers (silent churn vs. vocal churn)
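One simple way to run the comparison is to compute, per cohort, what share of cancellations falls into each category. The sketch below assumes each cancellation has already been given a cohort label and a single category; the function name and sample data are hypothetical.

```python
from collections import Counter, defaultdict

def category_share_by_cohort(records):
    """records: iterable of (cohort, category) pairs.

    Returns {cohort: {category: share of that cohort's cancellations}}.
    """
    counts = defaultdict(Counter)
    for cohort, category in records:
        counts[cohort][category] += 1
    return {
        cohort: {cat: n / sum(c.values()) for cat, n in c.items()}
        for cohort, c in counts.items()
    }

# Hypothetical sample data.
records = [
    ("monthly", "price"), ("monthly", "price"), ("monthly", "price"),
    ("monthly", "value_decay"),
    ("annual", "quality"), ("annual", "price"),
]
shares = category_share_by_cohort(records)
```

Comparing shares rather than raw counts keeps a large cohort from drowning out a small one.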

Step 4: Leading indicator identification

Cross-reference cancellation themes with behavioural data to find predictive signals. The goal: identify which review/feedback patterns predict cancellation 30-60 days before it happens.
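A basic test of whether a feedback pattern is predictive is its lift: the cancellation rate among subscribers who showed the signal divided by the rate among those who did not. The sketch below assumes signals were recorded in the 30-60 day window upstream; the data layout and function name are hypothetical.

```python
def signal_lift(subscribers, signal):
    """Cancellation rate with the signal divided by the rate without it.

    subscribers: list of dicts with 'signals' (set of str) and 'cancelled' (bool).
    Returns None when either group is empty or the baseline rate is zero.
    """
    with_signal = [s for s in subscribers if signal in s["signals"]]
    without = [s for s in subscribers if signal not in s["signals"]]
    if not with_signal or not without:
        return None
    rate_with = sum(s["cancelled"] for s in with_signal) / len(with_signal)
    rate_without = sum(s["cancelled"] for s in without) / len(without)
    if rate_without == 0:
        return None
    return rate_with / rate_without

# Hypothetical sample: 3 of 4 subscribers with the signal cancelled, 1 of 4 without.
subscribers = (
    [{"signals": {"asked_cancel_policy"}, "cancelled": True}] * 3
    + [{"signals": {"asked_cancel_policy"}, "cancelled": False}]
    + [{"signals": set(), "cancelled": True}]
    + [{"signals": set(), "cancelled": False}] * 3
)
lift = signal_lift(subscribers, "asked_cancel_policy")
```

A lift well above 1 suggests the pattern is worth monitoring. With small samples it can easily be noise, so check group sizes before acting on it.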

Step 5: Intervention design

For each taxonomy category with sufficient volume, design a targeted intervention:

- Price sensitivity: Flexible plans, pause options, annual discount positioning

- Value decay: Personalisation improvements, surprise elements, "what's new" campaigns

- Quality decline: Operational fixes with proactive communication

- Life change: Easy pause/resume, seasonal plans

- Competitor pull: Feature gap analysis, switching-cost communication

- Friction/UX: Process simplification, cancellation flow redesign
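The mapping above can be encoded as a lookup that only returns interventions for categories with enough volume to justify the work. In this sketch the `min_volume` threshold of 50 is a hypothetical placeholder, since the text does not define "sufficient volume", and the function name is illustrative.

```python
# Interventions copied from the list above.
INTERVENTIONS = {
    "Price sensitivity": ["flexible plans", "pause options", "annual discount positioning"],
    "Value decay": ["personalisation improvements", "surprise elements", "what's new campaigns"],
    "Quality decline": ["operational fixes with proactive communication"],
    "Life change": ["easy pause/resume", "seasonal plans"],
    "Competitor pull": ["feature gap analysis", "switching-cost communication"],
    "Friction/UX": ["process simplification", "cancellation flow redesign"],
}

def plan_interventions(category_counts, min_volume=50):
    """Return interventions for categories with at least min_volume cancellations.

    min_volume is a hypothetical threshold; pick one that suits your base size.
    """
    return {
        category: INTERVENTIONS[category]
        for category, count in category_counts.items()
        if count >= min_volume and category in INTERVENTIONS
    }

# Hypothetical counts from one quarter of categorised feedback.
plan = plan_interventions({"Price sensitivity": 120, "Life change": 8, "Friction/UX": 50})
```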

The cancellation sentiment index

Build a rolling metric that aggregates cancellation-related sentiment across all sources:

- Count cancellation-intent mentions per 1000 active subscribers (normalised for growth)

- Weight by source reliability (support tickets > exit surveys > app reviews > social)

- Track week-over-week change

A 15%+ increase in your cancellation sentiment index within a two-week window is a reliable predictor of elevated churn in the following month.
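The index can be sketched as a weighted mention count per 1000 active subscribers, with a check for the 15% rise. The text gives only the ordering of source reliability, so the numeric weights below are hypothetical, as are the function names and sample figures.

```python
# Hypothetical weights; only the ordering
# (support tickets > exit surveys > app reviews > social) comes from the text.
SOURCE_WEIGHTS = {
    "support_ticket": 1.0,
    "exit_survey": 0.75,
    "app_review": 0.5,
    "social": 0.25,
}

def cancellation_sentiment_index(mention_counts, active_subscribers):
    """Weighted cancellation-intent mentions per 1000 active subscribers."""
    weighted = sum(SOURCE_WEIGHTS[src] * n for src, n in mention_counts.items())
    return weighted * 1000 / active_subscribers

def is_elevated(index_two_weeks_ago, index_now, threshold=0.15):
    """True when the index rose by at least `threshold` (15% by default)."""
    change = (index_now - index_two_weeks_ago) / index_two_weeks_ago
    return change >= threshold

# Hypothetical week: 40 tickets, 20 surveys, 10 app reviews, 20 social posts
# mentioning cancellation, against 10,000 active subscribers.
index = cancellation_sentiment_index(
    {"support_ticket": 40, "exit_survey": 20, "app_review": 10, "social": 20},
    active_subscribers=10_000,
)
```

Dividing by active subscribers is what keeps the index comparable as the base grows. Without it, a growing business would see the raw count rise even when sentiment is stable.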

Common mistakes in cancellation analysis

Mistake 1: Taking exit surveys at face value. The top checkbox response is "too expensive" for nearly every subscription business. Deeper text analysis often reveals that price is not the actual driver; it is simply the easiest answer to give.

Mistake 2: Ignoring the happy cancellers. Some subscribers cancel with positive reviews ("loved it but my situation changed"). These are your best win-back candidates and require different analysis.

Mistake 3: Over-indexing on a vocal minority. A Reddit thread with 200 upvotes about cancellation may represent 0.01% of your subscriber base. Weight feedback by how representative it is of your subscribers, not by virality.

Mistake 4: Analysing cancellation without analysing retention. You need a control comparison — what do reviews from long-term loyal subscribers say that cancellers do not? The SWOT analysis framework works well here.
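A lightweight version of that control comparison is to find words that appear in a large share of canceller reviews but rarely in loyal-subscriber reviews. The sketch below uses simple document frequency; the function names, the `min_rate` cut-off, and the sample reviews are hypothetical.

```python
import re
from collections import Counter

def term_rates(reviews):
    """Share of reviews that contain each word (document frequency)."""
    if not reviews:
        return {}
    counts = Counter()
    for review in reviews:
        counts.update(set(re.findall(r"[a-z']+", review.lower())))
    return {term: n / len(reviews) for term, n in counts.items()}

def canceller_skew(canceller_reviews, loyal_reviews, min_rate=0.5):
    """Terms common among cancellers, ranked by how much rarer they are among loyal users."""
    cancel_rates = term_rates(canceller_reviews)
    loyal_rates = term_rates(loyal_reviews)
    skew = {
        term: rate - loyal_rates.get(term, 0.0)
        for term, rate in cancel_rates.items()
        if rate >= min_rate  # hypothetical cut-off to ignore one-off words
    }
    return sorted(skew.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical sample data.
cancellers = ["boxes feel repetitive", "repetitive items again", "shipping was slow"]
loyal = ["shipping was fast", "love the variety", "shipping was quick"]
ranked = canceller_skew(cancellers, loyal)
```

Here "shipping" appears in both groups and so drops out, which is the point of the control: it separates complaints specific to cancellers from themes everyone mentions.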

Turning analysis into retention revenue

The businesses that reduce churn through review analysis share a common pattern: they close the feedback loop visibly.

When cancellation analysis reveals a systematic issue (say, 23% of cancellers mention "repetitive content"), the fix alone is not enough. You need to communicate the fix to:

- Current at-risk subscribers (pre-empting their cancellation)

- Recent cancellers (enabling win-back)

- The broader community (building trust that feedback matters)

This approach consistently delivers 15-25% churn reduction in the first quarter after implementation — primarily because most of that churn was preventable frustration, not fundamental product-market misfit.
