Real Estate Agent Review Analysis: Evaluating Property Managers and Real Estate Professionals Through Client Feedback

Real estate transactions are high-stakes, high-dollar interactions with long-term consequences. Learn how to systematically analyse reviews of agents, brokers, and property managers on Google, Zillow, and Yelp to assess transaction quality, communication reliability, and hidden red flags in the market.

# Real Estate Agent Review Analysis: Evaluating Property Managers and Real Estate Professionals Through Client Feedback

Real estate professionals operate in a market where information asymmetry is endemic. Buyers and sellers hire agents based on personal referrals, local brand presence, and sign-board visibility. They do not systematically analyse client feedback about transaction quality, communication, negotiation skill, or ethical conduct.

Yet reviews exist on Google, Zillow, Yelp, and agent-specific platforms. They reveal patterns: agents who miss deadlines, agents who pressure clients into bad deals, agents who disappear after closing, and agents who ethically guide clients through complex decisions. The data is public. Few use it.

This guide shows you how to analyse real estate professional reviews as you would service provider feedback in any other market, and what those reviews reveal about agent and broker quality.

## Why real estate reviews are structurally unique

Real estate feedback has distinct properties:

1. Transaction complexity masks process quality

A client who closes on their dream home, or sells at a 10% gain, will often rate their agent 5 stars even if the agent missed an inspection deadline, failed to negotiate repair costs, and went silent for three weeks. The outcome (home purchased, money made) overwhelms process quality (communication, diligence, advocacy).

As with legal services reviews, you must separate transaction outcome from agent process. Good agents close deals. Great agents close deals while keeping clients informed, protecting their interests, and demonstrating competence.

2. Specialisation creates fundamentally different work

An agent handling single-family home sales operates in a different world than an agent managing multi-unit rental properties or commercial real estate. Reviews of residential agents tell you nothing about commercial agent quality. Market specialisation matters enormously.

3. Timing is inflexible

Buyers and sellers operate on rigid timelines (moving dates, market windows, school years, job starts). An agent who is slower than competitors or misses deadlines creates direct harm. Unlike SaaS reviews where users can tolerate imperfection for months, real estate clients are time-bound. Reviews will reflect this urgency.

4. Trust is binary

Buyers and sellers are signing documents that commit them to hundreds of thousands of dollars. One missing inspection, one failed disclosure, one predatory negotiation damages trust irreparably. Reviews often mention trust directly: "I trusted her," or "I never felt he had my back."

5. Relationship ends after transaction

Unlike subscription software, where customers interact with a provider repeatedly, real estate clients typically use an agent once every 5-10 years, when they move. A review reflects a single transaction, not an ongoing relationship. High satisfaction does not mean loyalty; low satisfaction often means the client will not return and will warn friends.

## The real estate review ecosystem

### Google (Google Business Profile, formerly Google My Business; Google Maps)

Signal quality: HIGH
- Broad audience (buyers, sellers, renters)
- Authentic (clients are less likely to be performing for marketing optics)
- Location-based visibility
- Direct response mechanism

Bias: Concentrated among clients who actively leave reviews (the very satisfied or the angry), so it underrepresents the silent majority. Visibility is biased toward agents in concentrated markets (urban agents accumulate more reviews than rural agents of the same quality).

### Zillow (Zillow, Trulia)

Signal quality: MEDIUM-HIGH
- Real estate-specific audience
- Verified transaction connections (for some reviews)
- Specialisation by property type
- Pricing transparency for historical transactions

Bias: Tech-forward buyers and sellers overrepresented. Older, less-connected agents underrepresented. Volume skews toward popular agents with existing client bases (survivorship bias).

### Yelp

Signal quality: MEDIUM
- Broad audience
- Robust review filtering and fake detection
- Community reputation
- Historical archive

Bias: Real estate-specific feedback is less common than restaurant and service reviews. Yelp users skew younger and urban. Less helpful for evaluating rural or niche real estate markets.

### Agent-specific platforms (Realogy, RE/MAX internal ratings)

Signal quality: MEDIUM
- Company incentive to track quality
- Internal performance data
- Peer feedback components
- Limited public visibility

Bias: Company incentive to suppress negative feedback or require login access. Less transparent than public platforms.

### MLS boards and association directories

Signal quality: LOW (for service quality)
- License verification and compliance
- Disciplinary history (if searchable)
- Minimal performance data
- No client feedback mechanism

Use: Verification tool, not quality signal.

## Systematic real estate review analysis framework

### Step 1: Define your analysis scope

Are you evaluating:
- An individual agent (qualitative, personal service quality)
- A brokerage firm (team coordination, compliance, scale)
- A market segment (residential vs commercial vs rental property management)
- An agent type (buyer's agent vs seller's agent vs property manager)

Each requires different review filtering:

| Scope | Review sources | Key metrics | Timeframe |
|---|---|---|---|
| Individual agent | Google, Zillow, agent website | Communication, responsiveness, transaction speed | Last 12 months (minimum 5-10 reviews) |
| Brokerage firm | Google, Zillow, Better Business Bureau | Compliance, brand consistency, agent quality variance | Last 24 months (minimum 15-20 reviews) |
| Market segment | Zillow, filtered by property type | Price alignment, inspection frequency, holdback rate | Last 24 months |
| Agent type | Google and Zillow, split into seller's-agent and buyer's-agent reviews | Dual-agency conflicts, negotiation outcomes, price | Last 12 months |
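The timeframes and minimum sample sizes in the table can be applied as a gate before any analysis starts. The following is a minimal sketch, not a prescribed implementation: `SCOPE_RULES` and `has_enough_reviews` are hypothetical names, the lower bound of each range is used as the threshold, and a month is approximated as 30 days.

```python
from datetime import date, timedelta

# Lower bounds taken from the scope table; names and structure are illustrative.
SCOPE_RULES = {
    "individual_agent": {"window_months": 12, "min_reviews": 5},
    "brokerage_firm": {"window_months": 24, "min_reviews": 15},
}


def has_enough_reviews(scope: str, review_dates: list[date], today: date) -> bool:
    """Return True if enough reviews fall inside the scope's timeframe."""
    rule = SCOPE_RULES[scope]
    # Assumption: one month is treated as 30 days for simplicity.
    cutoff = today - timedelta(days=30 * rule["window_months"])
    recent = [d for d in review_dates if d >= cutoff]
    return len(recent) >= rule["min_reviews"]
```

If the gate fails, the honest conclusion is "not enough data", not a low or high score.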

### Step 2: Review collection and contextual enrichment

Gather reviews and add context:

| Data point | How to collect | Why it matters |
|---|---|---|
| Review text | Platform scraping (Google, Zillow, Yelp) | Sentiment and process detail |
| Star rating | Platform API or manual | Overall satisfaction signal |
| Transaction date | Review date, home closing records (public) | Timing between transaction and review |
| Property details | MLS records, Zillow history | Market context (property value, market conditions) |
| Listing vs buyer agent | Review text, MLS role | Different responsibilities and conflicts |
| Market conditions | Zip code market data at transaction date | Hot market vs down market expectations |
| Agent response | Platform tracking | Responsiveness to feedback |
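One way to keep these data points together is a single record per review. The sketch below is an assumption about how such a record might look; the class name `EnrichedReview`, its field names, and the allowed string values are all hypothetical and not tied to any platform's API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EnrichedReview:
    """One review plus the context columns from the table (illustrative schema)."""
    text: str
    stars: int
    review_date: date
    closing_date: Optional[date] = None       # from public closing records, if found
    agent_role: Optional[str] = None          # e.g. "listing" or "buyer"
    market_condition: Optional[str] = None    # e.g. "hot" or "down"
    agent_responded: bool = False

    def days_from_closing_to_review(self) -> Optional[int]:
        """Gap between the transaction and the review, when the closing date is known."""
        if self.closing_date is None:
            return None
        return (self.review_date - self.closing_date).days
```

Optional fields default to `None` so that a review with missing context is still recorded and not silently dropped.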

### Step 3: Transaction outcome separation

Classify reviews as:

Transaction successful + process excellent: "Smooth closing, agent explained each step, handled all paperwork professionally, closed on time, and continues to check in. Exceptional service."
- Signal: High agent quality
- Action: Model this agent, highlight in marketing

Transaction successful + process problematic: "Got the house at a good price, but the agent was hard to reach for three weeks, inspection report had a typo that could have caused problems, and we only learned about the foundation issue from the home inspector, not the agent."
- Signal: Lucky outcome masks poor diligence. Agent stumbled into success.
- Action: Process improvement required (diligence, communication, client advocacy)

Transaction failed or difficult + process excellent: "Market moved before we could close, ultimately did not buy. But the agent was transparent about timing risk, helped us understand our options, and we appreciated the professionalism. We will use them again."
- Signal: High agent quality, appropriate expectation-setting
- Action: Neutral feedback (market outcome, not agent quality)

Transaction failed + process problematic: "We lost the bidding war for our dream home, and the agent never prepared us for competing offers, never suggested an escalation clause, and was unreachable during the critical offer period. Felt abandoned."
- Signal: Poor diligence + market loss = high dissatisfaction, reputational damage
- Action: Process and competence improvement required

Group reviews by outcome-process quadrant. Lost transactions caused by market conditions (competitive market, overpriced sellers, motivated buyers) are not an agent quality problem. Lost transactions caused by poor negotiation, missed deadlines, or inadequate diligence are.
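The quadrant grouping can be sketched in a few lines once each review carries two labels. This example assumes the labels (did the transaction close, was the process good) have already been assigned by a human reader or an upstream classifier; the function names are hypothetical.

```python
from collections import Counter


def quadrant(transaction_closed: bool, process_good: bool) -> str:
    """Map the two labels onto one of the four outcome-process quadrants."""
    outcome = "successful" if transaction_closed else "failed"
    process = "excellent" if process_good else "problematic"
    return f"{outcome}+{process}"


def quadrant_counts(labelled_reviews: list[tuple[bool, bool]]) -> Counter:
    """Count reviews per quadrant; each item is (transaction_closed, process_good)."""
    return Counter(quadrant(closed, good) for closed, good in labelled_reviews)


def process_problem_share(labelled_reviews: list[tuple[bool, bool]]) -> float:
    """Share of reviews reporting process problems, regardless of outcome."""
    if not labelled_reviews:
        return 0.0
    bad = sum(1 for _, good in labelled_reviews if not good)
    return bad / len(labelled_reviews)
```

Reading `process_problem_share` alongside the star average is the point of the exercise: it surfaces the "successful + problematic" reviews that a 5-star rating would otherwise hide.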

### Step 4: Extract process quality dimensions

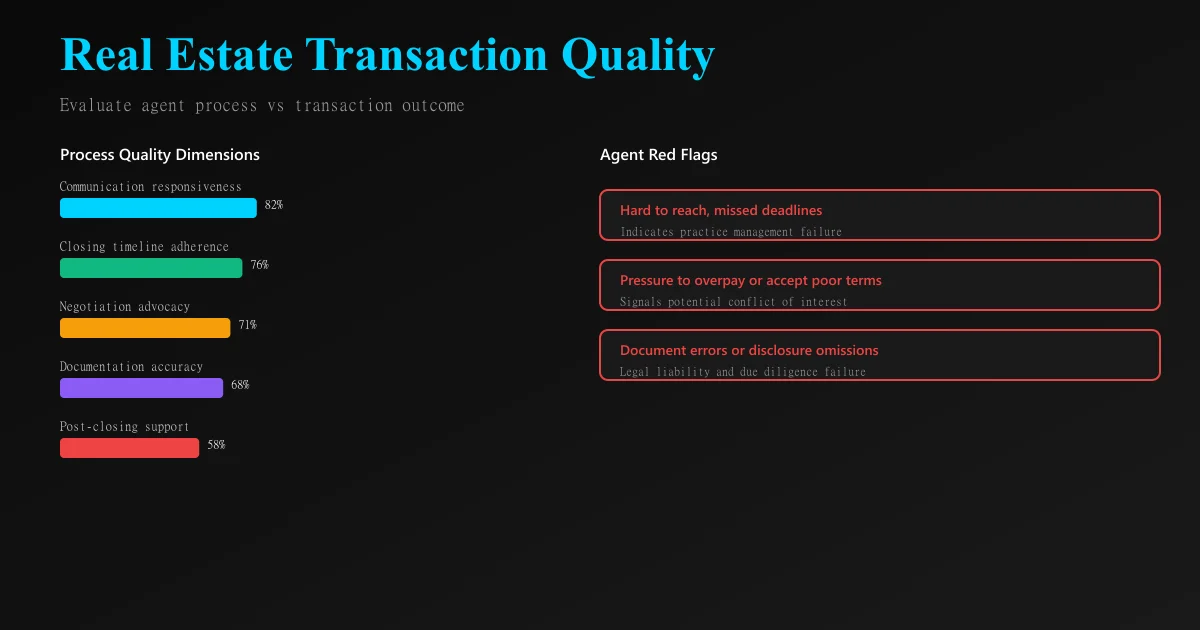

Within process-focused feedback, identify patterns:

| Process dimension | Positive signals | Red flags |
|---|---|---|
| Communication | "Responsive," "always available," "answered every question," "called with updates" | "Hard to reach," "ghosted," "long delays," "never returned calls" |
| Competence | "Negotiated a great deal," "explained market conditions," "found inspection issues," "knew the area" | "Didn't understand market," "missed obvious problems," "poor negotiation," "overpriced listing" |
| Diligence | "Thorough inspection coordination," "reviewed contract carefully," "caught disclosure issue," "explained all terms" | "Rushed closing," "missed deadline," "didn't explain terms," "contract had errors" |
| Representation | "Fought for my interests," "negotiated hard," "acted like my advocate," "didn't pressure me" | "Pressure to overpay," "dual agency conflict," "more interested in closing fee," "didn't advocate" |
| Documentation | "Paperwork handled seamlessly," "clear explanations of disclosures," "no surprises at closing" | "Documents were wrong," "closing disclosure had errors," "hidden costs," "surprise fees" |

For each dimension, calculate:
- Frequency (how many reviews mention this factor)
- Polarity ratio (positive % vs negative % for this dimension)
- Trend (improving or declining quarter-over-quarter)
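Frequency and polarity can be approximated with plain phrase matching. The sketch below uses a handful of phrases from the table for two dimensions only; the dictionary layout and function name are hypothetical, and substring matching is a deliberately crude stand-in for a real sentiment model.

```python
# A small subset of the table's phrases, lowercased (illustrative only).
DIMENSION_PHRASES = {
    "communication": {
        "positive": ["responsive", "always available", "called with updates"],
        "negative": ["hard to reach", "ghosted", "never returned calls"],
    },
    "diligence": {
        "positive": ["reviewed contract carefully", "explained all terms"],
        "negative": ["rushed closing", "missed deadline", "contract had errors"],
    },
}


def dimension_stats(reviews: list[str]) -> dict:
    """Per dimension: how many reviews mention it and what share of hits is positive."""
    stats = {}
    for dimension, phrases in DIMENSION_PHRASES.items():
        positive = negative = mentions = 0
        for text in reviews:
            lowered = text.lower()
            # Caveat: substring matching means "responsive" also matches "unresponsive".
            hit_pos = any(p in lowered for p in phrases["positive"])
            hit_neg = any(p in lowered for p in phrases["negative"])
            positive += hit_pos
            negative += hit_neg
            mentions += hit_pos or hit_neg
        total = positive + negative
        stats[dimension] = {
            "frequency": mentions,
            "positive_share": positive / total if total else None,
        }
    return stats
```

Running the same function on each quarter's reviews separately and comparing `positive_share` across quarters gives a rough trend.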

### Step 5: Red flag assessment

Certain patterns warrant escalation:

| Red flag | Severity | Indication |
|---|---|---|
| Repeated communication unresponsiveness | HIGH | Process failure, client relationship collapse |
| Disclosure document errors or omissions | CRITICAL | Legal liability, ethical violation risk |
| Dual agency conflicts in reviews | CRITICAL | Potential conflict of interest violations |
| Pressure to overpay or accept poor terms | HIGH | Ethical concern, fiduciary violation |
| Missed deadlines or closing delays | HIGH | Competence or process failure |
| Contract errors or traps | CRITICAL | Due diligence failure, legal liability |
| Discrimination mentions | CRITICAL | Fair Housing Act violation, legal liability |
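A first-pass scan for these flags might look like the sketch below. The phrase lists and the escalation thresholds (one mention for CRITICAL, two for HIGH) are assumptions chosen for illustration, not values from any standard; a match only nominates a review for human reading.

```python
# Flag name -> (severity, trigger phrases). Phrases are illustrative assumptions.
RED_FLAGS = {
    "communication unresponsiveness": ("HIGH", ["never returned calls", "ghosted", "hard to reach"]),
    "disclosure errors or omissions": ("CRITICAL", ["failed to disclose", "never disclosed"]),
    "dual agency conflict": ("CRITICAL", ["dual agency", "worked for both sides"]),
    "missed deadlines": ("HIGH", ["missed deadline", "missed the deadline"]),
}

# Assumed thresholds: any CRITICAL mention escalates, HIGH needs a repeat.
MIN_MENTIONS = {"CRITICAL": 1, "HIGH": 2}


def flags_to_escalate(reviews: list[str]) -> list[tuple[str, str, int]]:
    """Return (severity, flag, review_count) for every flag that crosses its threshold."""
    escalations = []
    for flag, (severity, phrases) in RED_FLAGS.items():
        count = sum(1 for text in reviews if any(p in text.lower() for p in phrases))
        if count >= MIN_MENTIONS[severity]:
            escalations.append((severity, flag, count))
    # "CRITICAL" sorts before "HIGH" alphabetically, which matches priority here.
    return sorted(escalations)
```

The repeat threshold for HIGH flags mirrors the table's wording ("repeated" unresponsiveness), while CRITICAL items are surfaced on the first mention because a single credible report already carries legal weight.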

## Real estate vs professional services vs SaaS: review analysis comparison

Real estate review analysis is most similar to legal services review analysis: outcome bias dominates, specialisation matters, and the relationship usually ends with a single transaction.

Key differences:

| Property | Legal | Real estate | SaaS |
|---|---|---|---|
| Outcome bias | Severe | Severe | Low (continuous service) |
| Specialisation | Very high | High | Medium |
| Review volume per professional | Low (10-20/year) | Low-medium (20-50/year) | Medium-high (50+/year) |
| Feedback detail | Confidentiality limited | Market-based | Feature-specific |
| Satisfaction drivers | Process quality | Process quality | Product quality |

## Building a real estate feedback loop

### For agents: client satisfaction tracking

Track monthly:
- Response time score (replied within 24 hours / total interactions)
- Closing timeline score (closed on schedule / total transactions)
- Client communication satisfaction (felt informed and updated / total clients)
- Negotiation perception (felt agent advocated for me / total buyers/sellers)
- Transaction success rate (transaction closed / total offers made), with the caveat that market conditions affect this more than agent skill

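Compare each score against a cohort of agents working in the same market conditions, not against a fixed target. A buyer's agent who closes 95% of offers in a hot, multiple-offer market is performing well above average. The same rate in a down market, where offers face little competition, is far less remarkable.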
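The cohort comparison reduces to a ratio and a difference from the cohort median. A minimal sketch, assuming you already hold the same rate for a set of peer agents in the same market; `ratio` and `vs_cohort` are hypothetical helper names.

```python
from statistics import median
from typing import Optional


def ratio(numerator: int, denominator: int) -> Optional[float]:
    """Generic score such as closed / offers made; None when there is no activity."""
    return numerator / denominator if denominator else None


def vs_cohort(agent_rate: float, cohort_rates: list[float]) -> float:
    """Positive means the agent is above the cohort median, negative means below."""
    return agent_rate - median(cohort_rates)
```

For example, an agent with `ratio(19, 20)` (a 95% rate) sits 25 points above a cohort whose rates are 0.60, 0.70, and 0.80, but one point below a cohort at 0.94, 0.96, and 0.98. The median is used here so that one outlier agent does not shift the benchmark.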

### For brokerage firms: compliance and quality monitoring

Track quarterly:
- Average agent rating by market segment (residential, commercial, property management)
- Client satisfaction by transaction type (buyer representation, seller representation, investment property)
- Compliance red flags (disclosure errors, deadline misses, documented conflicts of interest)
- Response rate to client feedback (agents responding to reviews, addressing concerns)
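The first of these metrics is a simple group-and-average. The sketch below assumes each review has already been tagged with a market segment; the function name and the `(segment, stars)` tuple shape are hypothetical.

```python
from collections import defaultdict


def average_rating_by_segment(reviews: list[tuple[str, int]]) -> dict[str, float]:
    """Average star rating per market segment; each item is (segment, stars)."""
    buckets = defaultdict(list)
    for segment, stars in reviews:
        buckets[segment].append(stars)
    return {segment: sum(values) / len(values) for segment, values in buckets.items()}
```

Keeping segments separate matters because, as noted earlier, residential reviews say nothing about commercial or property management quality.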

## Common real estate review analysis mistakes

### Mistake 1: Treating all negative reviews as agent defects

Market conditions, seller intransigence, unrealistic buyer expectations, and market competition are beyond agent control. A client who loses a bidding war may blame their agent for "not trying hard enough" when the real issue is a hot market with multiple competing offers. Read the context before concluding agent quality is poor.

### Mistake 2: Ignoring dual agency disclosures and conflicts

Agents representing both buyer and seller in the same transaction have an inherent conflict: they collect the commission regardless of which side gets the better deal. Reviews mentioning "the agent worked for both sides," "felt like the agent was more interested in the other side," or "pressure to accept unfair terms" may indicate unmanaged dual agency. Verify the agent's disclosures.

### Mistake 3: Overweighting recent reviews without seasonal context

Real estate markets are seasonal. Summer listings sell faster. Winter listings move more slowly. An agent with 3 recent negative reviews during a winter market slowdown might be a victim of market timing, not incompetence. Review 12-month trends, not 3-month trends.
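The difference between the two windows is easy to see by computing both. A minimal sketch, assuming reviews are available as `(review_date, stars)` pairs and approximating 3 and 12 months as 90 and 365 days; `average_rating` is a hypothetical helper.

```python
from datetime import date
from typing import Optional


def average_rating(
    reviews: list[tuple[date, int]], today: date, window_days: int
) -> Optional[float]:
    """Mean star rating of reviews posted within the last `window_days` days."""
    recent = [
        stars for posted, stars in reviews
        if 0 <= (today - posted).days <= window_days
    ]
    return sum(recent) / len(recent) if recent else None
```

Calling it with `window_days=90` and again with `window_days=365` on the same reviews shows whether a recent dip is a break from the agent's yearly pattern or simply a slow season dominating a small sample.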

### Mistake 4: Failing to cross-reference with public transaction records

Zillow sale histories and county records are public, and much MLS data is syndicated to public listing sites. Cross-check review claims ("agent negotiated a great deal") against actual transaction prices and local comp data. A claim such as "I negotiated 15% above asking" is unremarkable in a hot market. In a slow market, it is exceptional. Context matters.
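The "15% above asking" check can be made concrete by comparing the agent's sale-to-list ratio with the median ratio of local comparable sales. This is a sketch under the assumption that you have collected comp prices yourself from public records; the function names and the comp figures in the usage note are hypothetical.

```python
from statistics import median


def sale_to_list(sale_price: float, list_price: float) -> float:
    """Ratio above 1.0 means the property sold over asking."""
    return sale_price / list_price


def premium_over_market(
    agent_sale: float, agent_list: float, comps: list[tuple[float, float]]
) -> float:
    """Agent's sale-to-list ratio minus the median ratio of comparable sales.

    `comps` holds (sale_price, list_price) pairs for comparable local sales.
    """
    market = median(sale_to_list(sale, asking) for sale, asking in comps)
    return sale_to_list(agent_sale, agent_list) - market
```

With made-up numbers, a $575,000 sale on a $500,000 listing is 15% over asking. Against comps that also sold around 15% over asking the premium is roughly zero; against comps that sold around 1% under asking the premium is about 16 points, which is the kind of result worth crediting to the agent.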

### Mistake 5: Treating property manager reviews the same as agent reviews

Property managers are ongoing service providers, unlike agents, whose engagement usually ends with a single transaction. Reviews of property managers should be read as satisfaction over a multi-year period, not a single transaction. A property manager with mostly positive reviews and one terrible experience is probably reliable. For an agent, a single negative review can indicate a failed transaction.
